Pierre Huyghe’s sculpture Exomind hunches down in the tranquil garden, and whether coming or going, she offers a quiet greeting or good-bye to those who visit Uncanny Valley: Being Human in the Age of AI, opening February 22, 2020, at the de Young Museum. The figure, topped by a live beehive and kneeling in harmony with surrounding flora and fauna, embodies knowledge and communication. Inside the exhibition, Japanese engineer Masahiro Mori’s concept of the “uncanny valley,” a metaphor for the relationship between humans and autonomous machines, is examined by a group of international aural and visual artists. Just as the beehive is an exo-mind in everlasting formation and endless modification, so is the brain, and so is the theory of swarm intelligence. I spoke with Claudia Schmuckli, Curator in Charge of Contemporary Art at the Fine Arts Museums of San Francisco, who organized the show. The subject is overwhelming, but as children’s author Laura Roberts writes in Arrow’s Adventure, “Keep moving through the valleys to find your sight.”

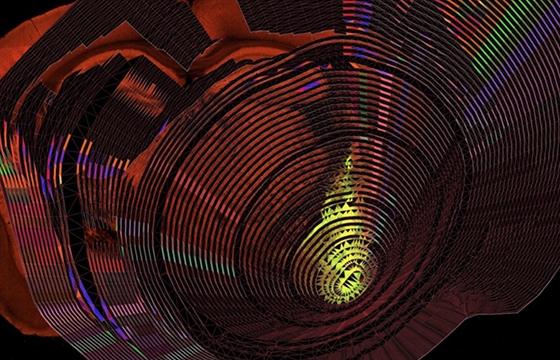

When scientists gave psychedelic drugs to spiders, the arachnids went off grid and asymmetrical to create webs gone wild. The spidergram was developed as a visual tool by the data-mining firm Palantir to organize, extract and exploit human behavioral data. Through the format of complex collage, the Zairja Collective is an exciting addition to the de Young Museum’s current show Uncanny Valley. In the creation of wire-frame designs, they explore the functionality and manipulation of data mining, and what a mighty web they weave, enticing us to consider the precarious potential of corporate insinuation. Take another trip to Uncanny Valley and the labyrinthine designs of the Zairja Collective.

Neuropit #14 (spidergram / visual cortex neuron), from the series Neuropit, 2016–present. The Zairja Collective (est. 2015, San Francisco Bay Area). Collage of wire-frame design for the geo-modeling of existing mining open pits and visual cortex neuron of a mouse. Courtesy of the artist.

Gwynned Vitello: Between Masahiro Mori’s description of Uncanny Valley as the ambivalent relationship between humans and robots and Anna Wiener’s book of the same name, the term Uncanny Valley conjures up various distinct reactions. How long have you been marinating this idea, and did proximity to Silicon Valley naturally spur you?

Claudia Schmuckli: Moving to San Francisco most definitely accelerated the impetus to do this exhibition. I was astounded by the fact that the subject had yet to receive serious recognition by a major cultural institution in the US, and being so close to the Valley, I became even more aware of the divide between the art and tech worlds. This exhibition hopes to be a catalyst for a more active and engaged dialogue.

Before conceiving the idea for Uncanny Valley: Being Human in the Age of AI, I was focused on the idea that forms of artificial intelligence and artificial life were going to merge in ways not yet imagined. I am neither a techno-optimist nor pessimist, yet it seemed apparent that this intersection would be a survivalist tool for responding to climate change and the distressing prospect of having to seek refuge on other planets. But as I began researching artists working at this intersection, the world was reconfigured by algorithmic psy-ops that turned elections by feeding off people’s tribal reflexes to propagate nationalism and its systems of cogs. Extremist and discriminatory tropes thought to be buried in history re-emerged as a partisan body – an ideological Frankenstein – floating to the surface of the political landscape like the monstrous island of plastic in the Pacific Ocean.

Even before the 2016 US presidential election and Brexit, it had become clear that this development was driven by statistical machines, today simply called artificial intelligence, or AI, a type of machine intelligence that has traditionally been applied to decrease uncertainty in fields as diverse as climate science, healthcare, and gaming – areas that benefit from the prediction of potential risks. But introduced into the social sphere, the predictive mechanisms of AI have instead wreaked havoc and augmented uncertainty and instability. In doing so, they’ve triggered the resurgence of a male “hero” driven by anger and thirst for recognition – a pseudo Achilles of Greek mythology, yet stripped of the tragic appeal that made him legend.

But how had AI become so effective at reshaping reality? This question led me to redirect my focus from the future intersection of AI and artificial life to the forms in which AI is operative today. Given the important role of social media, e-commerce sites and search engines in this landscape, I began to think about how the problem of AI is inherently visual, as our primary engagement with it is through images. I realized that the way we “see” AI seemed to magnify early avant-garde visual strategies, in particular, montage.

How is AI reshaping our idea of the machine, and how have its applied mechanisms expanded on aesthetic concepts that have guided and influenced our understanding of the human-machine relationship? The show looks at the uncanny, a fundamentally 20th-century psychoanalytic idea, and the uncanny valley – a term proposed by robotics expert Masahiro Mori in 1970 to describe human reactions to life-like machines. I consider the dueling definitions of the uncanny put forth by Ernst Jentsch and Sigmund Freud to propose a different definition, one that goes beyond Mori’s imagination of the robotic double. This reconfigured Uncanny Valley incorporates the AI imaginary – the trove of images and symbols derived from the metaphors that guide and describe the design, operations, and applications of AI. Ranging from models of collective intelligence to forms of excavation and statistical alter-egos, they define a visual language of AI from within.

I think, then, that Stephanie Dinkins’s video still Conversations with Bina48 is a good image to start with...

It’s one we consciously chose because it’s this encounter between the artist and a sophisticated AI-programmed social robot, right? The relationship between humans and machines is really the subject of the exhibition.

Ha, we’re morphing! We wear machines and talk to them.

Although the history of AI goes back to the 50s, the way it is operative in the world right now is recent, only going back to the 2000s. We are really talking about a new reality that is being created by AI, and my interest is to examine, on the one hand, how AI is shaping our engagement as humans with machines, and on the other hand, how AI is redefining concepts that have guided how we have thought of that relationship for most of the 20th century. The human-machine relationship has a very long history, basically back to antiquity.

Well, this is beyond a simple wheel and axle.

For the longest time, it has been guided by questions of resemblance, both physical and intellectual, and that’s where Uncanny Valley comes in as a term. The title plays with the resonances of both words individually and together: the Uncanny as a psychoanalytical concept that has a long and important history in aesthetics that is tied to technology. That goes back to Freud and his analysis of E.T.A. Hoffmann’s story The Sandman, which has been fundamental for the sort of machine imagery throughout the 20th century. Then on the technological and robotics side, the Uncanny Valley has been coined as a term to describe the human-machine relationship in terms of our physical encounter with them, the experiential dimension: the thing that happens when you encounter a robot that is maybe not quite or... just a little too perfect.

And that’s addressed in Stephanie Dinkins’s piece.

Those people who are familiar with the term uncanny valley will immediately recognize that discourse in this piece because it has persisted in technological circles but also in the popular imagination. You read a lot about the uncanny valley being this zone or terrain of discomfort that we experience when we encounter a humanoid robot, right? Building on that history, what this exhibition argues is that AI changes the terms of how we imagine and define the human-machine relationship. Because when we talk about robots, we talk about physical objects that we see. When we talk about AI, we’re talking about invisible mechanisms that we may not be aware of that reshape our relationship to the machine and, in doing so, reshape us.

Specifically, the social robot Bina48 is modeled on a black woman, but without any understanding of black history. Dinkins shows how the industry, a homogeneous group lacking in diversity, is unprepared to successfully create technologies that represent diversity globally.

Maybe you can talk about some of the other works in the show.

The first installation you will encounter after the title wall and introductory graphics is The Doors by Zach Blas, and it literally functions as a portal into the Uncanny Valley. Zach Blas anchors us in a space that invokes the culture of Silicon Valley. Now there’s Anna Wiener’s book Uncanny Valley talking about her experiences there, so it's kind of a funny coincidence.

Oh, his installation is the artificial garden!

It literally is a sort of mental and physical environment that is deeply informed by 1960s counter-culture. It appropriates those attitudes or rituals like LSD. Whereas in the 60s, LSD was meant to give you a spiritual awakening and a more open mind, the current culture is more about micro-dosing and geared to enhancing productivity. These are the cultural and corporate conditions in which AI is being developed, so Blas surrounds you with a six-channel video installation, a contemporary psychedelic trip of sorts that includes AI visuals that are akin to 60s light shows, as well as AI-generated poetry that takes its inspiration from The Doors.

Like Jim Morrison’s Doors?

Exactly, and with the crystalline lizard, it plays off of how Jim Morrison called himself the Lizard King. In biotech, the lizard is used for research in developing life-enhancing and regenerative technologies for the human body. So it’s tied to similar ideas like the technological developments that are geared towards transhumanism. As well as repurposing some dark, strong Jim Morrison, there are also instructionals from corporate mottos and logos that blend into this immersive environment. So you literally enter the Uncanny Valley, where entrepreneurial start-ups have transformed into capitalistic mega-corporations.

Then, as you walk through, the next encounter is Ian Cheng’s BOB, the Bag of Beliefs.

This is Ian Cheng’s digital simulation of a creature that is composed of what one could call six different algorithmic consciousnesses, where he plays with the possibility of simulating sentience and kind of uses our natural tendency for empathy and projection of sympathy onto small creatures to encourage us to interact with BOB because this is a creature who will be shaped by its interactions with us.

BOB is a Tamagotchi!?

Exactly, BOB is the algorithmic Tamagotchi whose evolution is dependent on the quality of the interaction it has with visitors. He’s always in a state of formation, constantly being reshaped.

So, he’ll change during the show?

Yeah, he will. If you’re lucky and he responds to your cooing and donations through the app, which is called The Shrine, he may appear on your app and actually leave the show and come to you. But his actions are unpredictable. Ian has invested him with what he calls a Congress of Demons, which are different drives that compete with one another.

Christopher Kulendran Thomas’s piece Being Human is in the next gallery, right?

It’s a film/video installation, created in collaboration with Annika Kuhlmann, that unpacks the philosophical foundations and ideological legacies of humanism to examine the validity of our understanding of the human as an Enlightenment construct, set against the newly prosperous Sri Lanka (his family is Tamil) with a booming art market. It looks at the intersection, really the complicity, of global politics, the phenomenon of an international art market and AI, as well as an international type of technology that is being disseminated as part of a hegemonic system that has informed the formation of this new society in Sri Lanka. It also uses a deep fake of Taylor Swift, for example, to illustrate how sophisticated AI has become, and to what extent it can emulate or imitate our understanding of humanness by talking about authenticity and creativity.

Which are the last bastions…

Exactly, which is one of the factors that is often cited as the decisive distinguishing factor between humans and machines. If AI can emulate this so perfectly, maybe in ways that even exceed our capacity, then what do we make of it? It’s really a big question.

“I think, therefore, I am” was a lot simpler!

Yeah, you’re still in the Descartes mode! So, what does it mean to be human? What are human rights in this scenario? And this leads us to another gallery where Forensic Architecture, the London-based collective, investigates human rights violations around the world. They have recently started using AI as a tool to enhance their research. An algorithm is a statistical model trained to do a certain thing, right? In this case, it was trained to find a hand grenade in millions and millions of photographs that were being searched online. Working with open-source, they created an algorithm that would be capable of finding this hand grenade in hundreds of thousands of images to aid their research. And so, because they only had very real concrete data like source images to work with, one of the things they did, which is common in machine learning, is to develop synthetic data to train the algorithm. So we’re showing both, the real data and the synthetic data.

The new project they're doing is called the Model Zoo, which is a meditation and introspection of algorithmic processes, as an opportunity to actually lay them bare for people who aren’t knowledgeable about how they work and are being trained and deployed, but also as an opportunity to sort of critique those mechanisms that are being unleashed in the social sphere.

Next is The Cage?

Simon Denny’s work is modeled on an unrealized Amazon patent for a worker’s cage. It embodies the dehumanizing tendency of automated labor, and it’s related to an essay that analyzes the anatomy of Amazon’s ecosystem – and bottom line. It’s really a wake-up call in terms of pointing towards both the humanitarian and ecological consequences of the data economy. All these things we interact with on a daily basis, that we may think of as immaterial, have hugely material footprints in the world, both in terms of the kinds of labor they create and also the environmental consequences they produce. It’s really of a planetary scale. Simon has homed in on this patent to realize this cage, which becomes the home of a virtual thornbill (a bird native to Australia) that tops the list of potentially extinct species. And so you can activate this bird, which then flutters in the cage – and becomes literally the canary in the coal mine. It’s accompanied by four related collages. This calamity of consequences is something we don’t think about when we eagerly draw on our devices.

Agnieszka Kurant strikingly interprets this theme in her sculptures made of sand, gold, glitter, and crystals constructed by termites.

She has a group of works, and to a certain extent, they all deal with how AI reformulates labor. One work is called AAI, which is actually an acronym Jeff Bezos used to describe the ghost work behind the crowdsourcing platform Amazon Mechanical Turk. The work you are supposedly outsourcing to your AI is actually done by thousands of people around the world who perform these micro-tasks computers cannot do, which are then assembled by AI into the product you’ve requested to see executed on the platform. On the one hand, her sculptures draw attention to the ghost work behind AI, and on the other hand, they address the fact that a lot of the research and development of algorithms is based on natural modes of intelligence, primarily swarm intelligence. Whether it’s termites or bees, these natural metaphors have been pervasive within the mechanics of the machine learning world, and this shows the indebtedness of programming to biological phenomena.

Moving on to Trevor Paglen’s work is like moving from ghost work to ghost eyes.

With They Took the Faces From the Accused and the Dead... (SD18), Paglen investigates the biases built into data sets developed to train AI systems. The American National Standards Institute archived mugshots that were used without consent for classification and pattern recognition. His mosaic of thousands of images reveals the biases built into these systems.

After being kind of overwhelmed by Paglen’s deep research and revelation of the myth of neutrality, we meet social activist Chris Toepfer in Hito Steyerl’s installation The City of Broken Windows.

Yes, Toepfer is a war veteran who has dedicated his life, post-Iraq, to social work and helping people in traditionally underserved neighborhoods. Steyerl met him and, in thinking about this piece, arrived at this idea as a metaphor for a society in which technology facilitates the interests of the rich and powerful and ignores the interests of the underprivileged.

On one hand, you have this short video that follows Chris Toepfer around and documents his restorative work, which is painting. So the videos are presented on easels, which is an important detail because, of course, it speaks to the historical trope of painting as a window to another world, as well as a restorative measure against social injustice. Chris Toepfer’s activities directly try to counteract the “broken window” theory, which presumes that a broken window invites fear and further violence (destruction invites destruction), and which leads to predictive policing systems like PredPol that have been criticized for being based on so-called 'dirty data'. Deployed into the algorithmic realm of statistical analysis, existing biases can be reinforced.

The second video is also short and crisp, and shows developers who are employed by a security company shattering windows, pane after pane, in an effort to collect recordings that train AI to recognize the sound of breaking glass, which is then deployed into security systems for home and building safety. So you have this broken glass metaphor that reverberates throughout and takes on different contexts – literally – as the sound will resonate through the gallery. As I said, the videos will be on easels, and they will be facing each other.

Lynn Hershman Leeson’s work is also a commentary on dependence on algorithms for policing, isn’t it?

The installation premiered at The Shed in New York, and I was very involved because we had always talked about it as a piece for this show. Shadow Stalker talks about the problematic aspects of deploying AI into the social sphere without a defined ethical framework or thought for the consequences. Specifically, it deals with PredPol, the police predictive system we talked about previously. It talks about the ease with which we volunteer information online and how that gets translated into actionable, tradable futures. It can completely manifest itself into something like predictive policing, where your zip code becomes who you are and the data profile defines you. So we interact with these platforms, each site with its own algorithm, creating a data profile over which you have very limited control.

Martine Syms dabbles in loss of control in Mythicc being.

It’s a piece concerned with issues of race and gender bias in general, and within the context of AI, in particular. It consists of a vinyl graphic modeled after what's called a 'threat model', which is a path mapped out to control the security of a network. Basically, the threat model will show you the vulnerabilities in your computer and software, except she applies the threat model here to herself. On top of that threat model is a monitor that shows a video called Mythicc being, which is her alter-ego, Teeny, the anti-Siri. Syms likes to talk about her work as her inversion of the servility of Siri and Alexa, who are feminized and gendered. Why are all of these home assistants female voices, why are they Alexa or Siri? It goes back to the stereotypical genderization of servitude, right? So she plays with that, basically a hostile, self-centered Siri. You can ask her questions and she will send back reflections revolving around social justice and representations of blackness and femininity in technology.

Did you notice that artists from various regions or disciplines have varying viewpoints or themes? Most of them seem to be born in the 80s.

I think it’s too easy to pinpoint the diversity of interests driving the individual artists’ projects by their place of origin. This is not an exhibition about identity politics, after all. Of course, personal interests and belief systems always come into play and will determine where an artist will focus their inquiry. If anything is being questioned across the board, then it is whether or not the humanist worldview, which is fundamentally Western, is still a valid framework for understanding what it means to be human.

While movies like Planet of the Apes and 2001: A Space Odyssey are very popular, they're not necessarily reassuring. In some way, we are frightened of AI and, at other times, acquiescent. How did curating this show enlighten or surprise you?

After three years of intensive research, I now have an understanding, which eluded me prior, of AI both as technology and fantasy. And I know it’s only the beginning, since this is a rapidly evolving field which is having a hugely transformative impact on our future. Curating this show has taught me that I will not take my eyes off the ball.

Uncanny Valley: Being Human in the Age of AI shows at the de Young Museum, part of the Fine Arts Museums of San Francisco, February 22 through October 25, 2020.